BD15035 is an Ultra-Low-Power AI Accelerator Chip for Edge-AI Applications

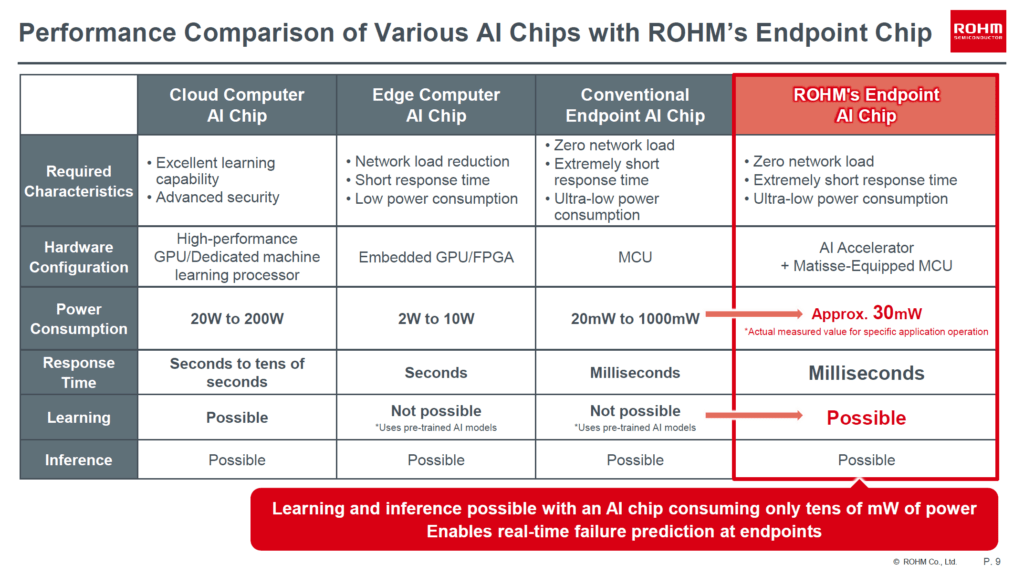

ROHM recently announced the BD15035, its new AI accelerator chip for edge-AI applications. The chip combines an ultra-low-power three-layer neural network accelerator with a high-performance 8-bit MCU core, and needs only a few tens of milliwatts to operate.

Demand is growing for products tailored to Artificial Intelligence (AI) applications at the edge. As software and hardware designs become more modular to manage increasing complexity, each component of a product can collect large amounts of data and pass on only the most relevant and actionable information. An example is a smart modular sensor that collects real-time data from its environment, runs complex algorithms, and produces an output that supports an immediate decision. One caveat of such applications is the limited power available to battery-operated devices. AI algorithms can be computationally intensive and power-hungry, so reducing the power consumption of edge-AI devices is an area of active research. Now ROHM, a Japanese semiconductor company, has come up with an AI chip, the BD15035, that consumes only a few tens of milliwatts and supports on-device learning for edge processing and real-time failure prediction without requiring a cloud server. Low-power hardware, when combined with efficient AI software cores, can help us build smaller and smarter edge-AI devices.

BD15035

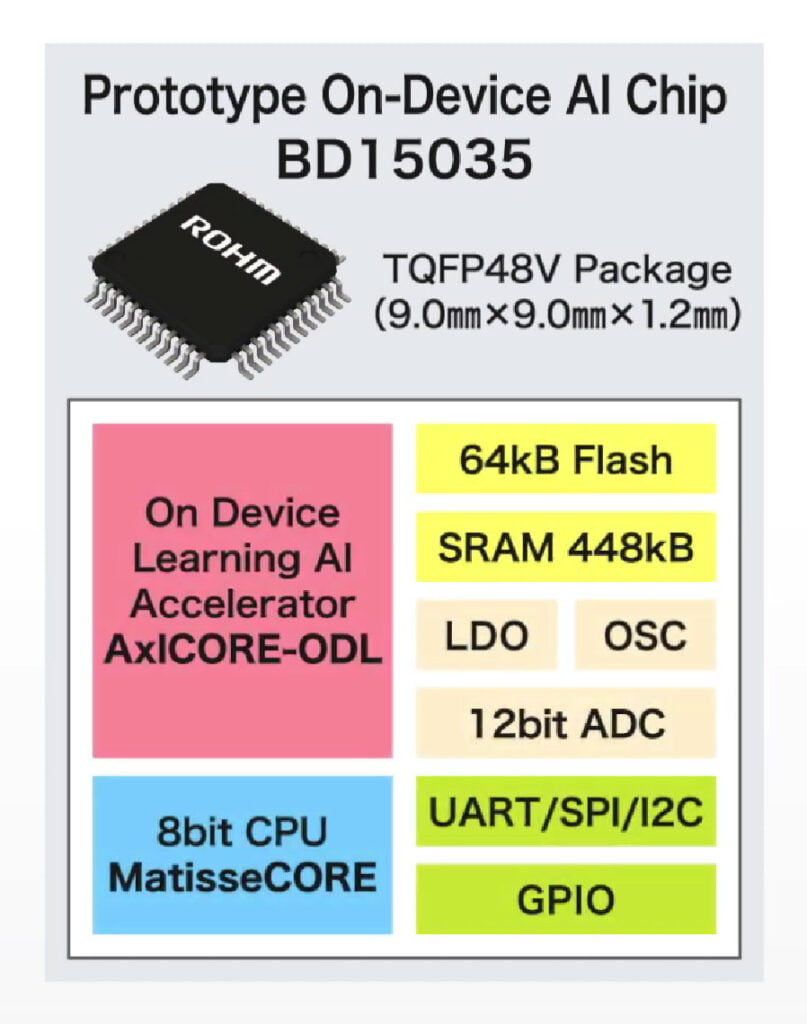

The BD15035 is a prototype-stage SoC (System-on-Chip) with an on-device-learning AI accelerator intended for edge-AI applications. The AI part of the chip is a three-layer neural network called AxICORE, developed by Prof. Hiroki Matsutani of the Dept. of Information and Computer Science, Keio University, Japan. This AI accelerator core consists of only 20,000 gates, which should keep its cost low once commercialized. Accompanying AxICORE is an efficient 8-bit tinyMicon MatisseCORE processor core developed by ROHM. According to ROHM, the main features of the processor core are:

- Industry’s highest-performing 8-bit MCU

- Flexible configuration of AI models

- Real-time debugging

- Compliance with ASIL-D, the strictest automotive safety integrity level defined in ISO 26262

In addition, the BD15035 comes with 64 KB of flash, 448 KB of SRAM, a 12-bit ADC, and communication and GPIO interfaces. The chip is available in a 9 × 9 × 1.2 mm TQFP48V package.

By combining an ultra-compact AI accelerator with a high-performance CPU, the BD15035 can perform on-device learning and inference with just a few tens of milliwatts of power, which is around 1,000 times lower than existing solutions. The power figure is only a ballpark; the actual consumption will be application-dependent. With its efficient hardware neural network, the chip can run moderately complex real-time AI applications such as predictive maintenance in an industrial environment. It takes inputs from the environment and produces an anomaly score that indicates how far they deviate from what the chip has learned. The main advantage of running AI at the edge is that it does not require a communication link to a cloud server doing the heavy lifting, which simplifies product development and deployment. ROHM plans to start commercial production sometime in 2023, with a focus on products tailored for motors and real-time sensors.
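To make the idea of an anomaly score from a three-layer network more concrete, here is a minimal Python sketch. It is not ROHM's or Keio University's algorithm: it assumes a small autoencoder (input, hidden, output layers) trained one sample at a time with plain gradient descent, and it uses the reconstruction error as the score. The class `TinyAutoencoder`, the helper `normal_sample`, and the synthetic four-feature data are all hypothetical and exist only for illustration.

```python
import math
import random


class TinyAutoencoder:
    """Hypothetical three-layer network (input -> hidden -> output) that learns to reproduce its input."""

    def __init__(self, n_inputs, n_hidden, learning_rate=0.05, seed=0):
        rng = random.Random(seed)
        self.lr = learning_rate
        # Small random weights; biases start at zero.
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden)] for _ in range(n_inputs)]
        self.b2 = [0.0] * n_inputs

    def _forward(self, x):
        hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
                  for row, b in zip(self.w1, self.b1)]
        output = [sum(w * h for w, h in zip(row, hidden)) + b
                  for row, b in zip(self.w2, self.b2)]
        return hidden, output

    def anomaly_score(self, x):
        """Mean squared reconstruction error of one input vector."""
        _, output = self._forward(x)
        return sum((o - xi) ** 2 for o, xi in zip(output, x)) / len(x)

    def learn(self, x):
        """One gradient-descent step on a single sample (online learning)."""
        hidden, output = self._forward(x)
        err = [o - xi for o, xi in zip(output, x)]
        # Back-propagate to the hidden layer before the output weights change.
        grad_hidden = [sum(err[j] * self.w2[j][k] for j in range(len(err))) * (1.0 - hidden[k] ** 2)
                       for k in range(len(hidden))]
        for j, e in enumerate(err):
            for k, h in enumerate(hidden):
                self.w2[j][k] -= self.lr * e * h
            self.b2[j] -= self.lr * e
        for k, g in enumerate(grad_hidden):
            for i, xi in enumerate(x):
                self.w1[k][i] -= self.lr * g * xi
            self.b1[k] -= self.lr * g


def normal_sample(rng):
    """Synthetic 'healthy' reading: four features driven by two hidden factors (assumed data)."""
    s, t = rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5)
    return [s, t, s + t, s - t]


def mean_score(model, samples):
    return sum(model.anomaly_score(x) for x in samples) / len(samples)


rng = random.Random(1)
model = TinyAutoencoder(n_inputs=4, n_hidden=2)
held_out = [normal_sample(rng) for _ in range(50)]

score_before = mean_score(model, held_out)      # untrained network
for _ in range(5000):                           # learn from normal operation only
    model.learn(normal_sample(rng))
score_after = mean_score(model, held_out)       # same normal data after learning
score_fault = model.anomaly_score([0.8, 0.8, -0.8, 0.0])  # breaks the normal pattern

print(f"normal before learning: {score_before:.4f}")
print(f"normal after learning:  {score_after:.4f}")
print(f"faulty reading:         {score_fault:.4f}")
```

Because the network only ever sees normal data, it learns to reconstruct that pattern well, and an input that breaks the pattern is reconstructed poorly. That gap is what the score captures. A hardware accelerator would implement the arithmetic very differently, but the input-to-score flow is the same.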

Evaluation & Development

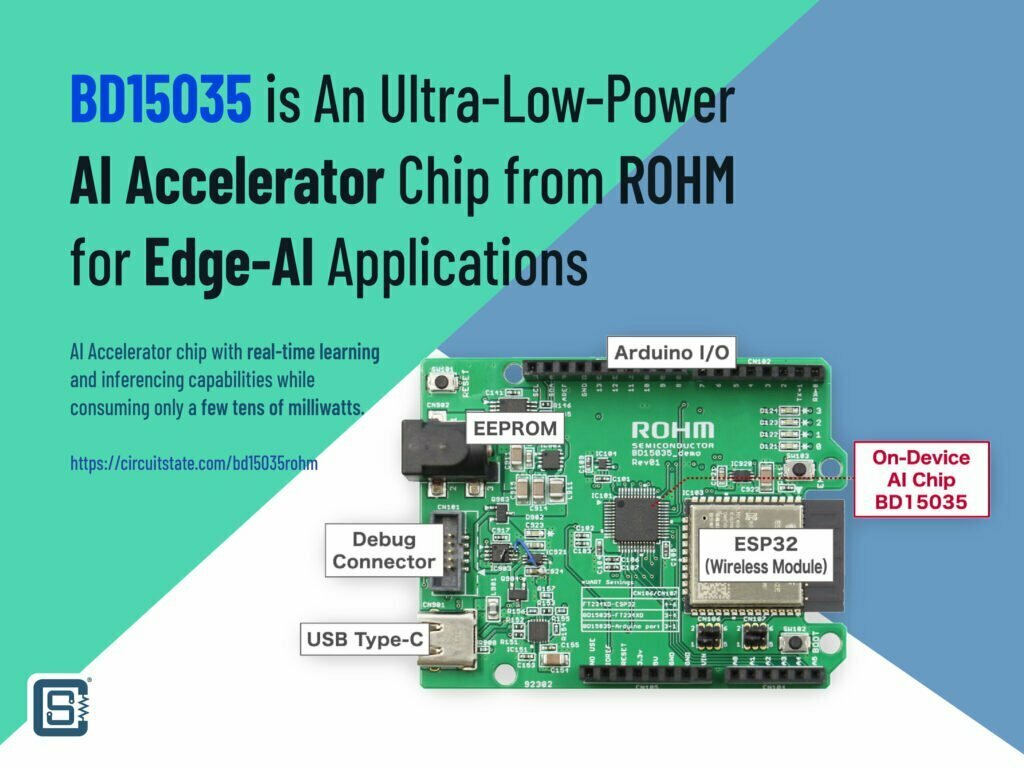

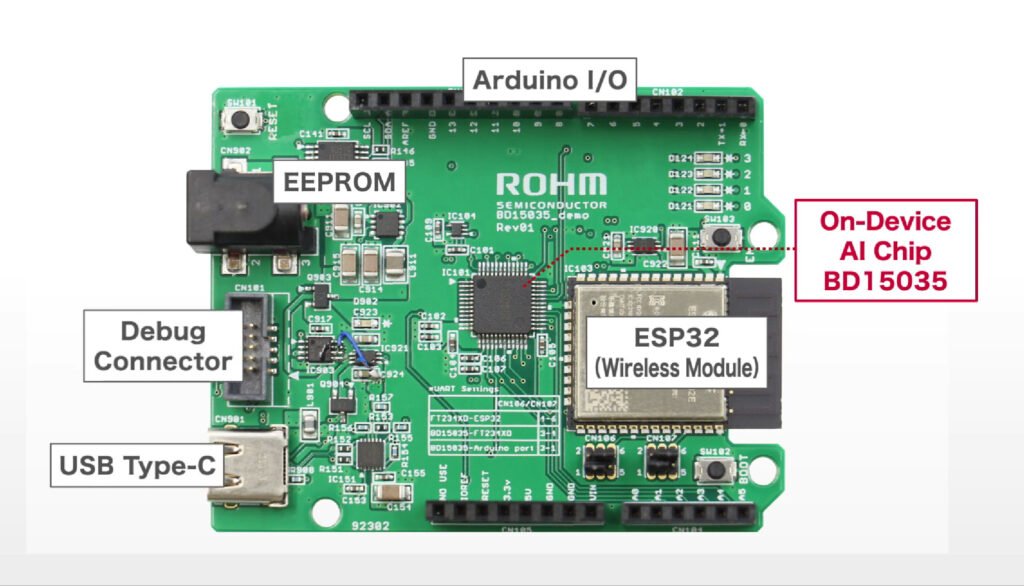

To help with evaluation of the BD15035, ROHM has created an evaluation board compatible with Arduino Uno. The board also includes an ESP32 module for connectivity. In its latest video, ROHM shows the board collecting data from an accelerometer placed on a running fan. As different conditions are simulated, the BD15035 detects anomalies (events that differ from the expected behavior) and sends the data to a computer.
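A demo like this has to turn a stream of anomaly scores into a yes/no decision at some point. The sketch below shows one common way to do that, and it is an assumption rather than a description of ROHM's firmware: it tracks the mean and standard deviation of scores seen during normal operation (Welford's running method) and flags any score more than `k` standard deviations above the mean. The class name `ScoreThreshold` and the calibration scores are made up for illustration.

```python
import math


class ScoreThreshold:
    """Hypothetical helper: flags scores far above those seen during normal operation."""

    def __init__(self, k=3.0):
        self.k = k          # how many standard deviations count as "far"
        self.n = 0          # number of calibration scores seen
        self.mean = 0.0     # running mean
        self.m2 = 0.0       # running sum of squared deviations (Welford)

    def update(self, score):
        """Fold one normal-operation score into the running statistics."""
        self.n += 1
        delta = score - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (score - self.mean)

    def limit(self):
        """Current decision threshold: mean + k * sample standard deviation."""
        std = math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0
        return self.mean + self.k * std

    def is_anomaly(self, score):
        return score > self.limit()


# Made-up scores from a fan running normally, used only to calibrate the threshold.
detector = ScoreThreshold(k=3.0)
for s in [0.10, 0.12, 0.09, 0.11, 0.10, 0.13, 0.08, 0.11]:
    detector.update(s)

print("threshold:", round(detector.limit(), 4))
print("0.12 flagged?", detector.is_anomaly(0.12))
print("0.45 flagged?", detector.is_anomaly(0.45))
```

The running update needs only three numbers of state, which suits a memory-constrained device. The choice of `k` trades false alarms against missed faults and would need tuning for a real application.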

Links

- Official announcement – ROHM Develops Ultra-Low-Power On-Device Learning Edge AI Chip: AI at the edge enables real-time failure prediction without the cloud server required

- Introduction to ROHM’s On-Device Learning AI Chip [PDF]

Short Link

- Short URL to this page – https://circuitstate.com/bd15035rohm